Autonomy

Navigation, coverage, decentralized coordination, and learned decision-making systems built for robotics environments ranging from agricultural exploration to multi-agent swarm behavior.

This domain collects autonomy-focused systems centered on policy learning, decentralized robot behavior, field coverage, and reflexive navigation. Each system is presented as a standalone engineering artifact with its own visual identity, project summary, and repository link.

Autonomy Systems Archive

Click any panel to open the corresponding GitHub repository.

AgriDroneRL

AgriDroneRL is a vision-driven reinforcement learning framework for autonomous drone navigation and coverage optimization over agricultural terrain. The system runs in a custom simulation environment in which aerial observations are represented by a normalized vegetation index proxy derived from satellite-style imagery, so the agent can reason about the spatial distribution of crop health. Reinforcement learning agents (PPO, DQN, and A2C) are trained on pixel-based inputs to maximize field coverage through sparse first-visit rewards while avoiding redundant exploration. The environment models discrete drone motion and local observation windows, so policies must learn spatial exploration strategies under limited perceptual context. The training environment is built on the MicroUAV-2D simulator (see the 'Platforms' tab), which provides a fast, deterministic platform for aerial perception–action experimentation. Training dynamics, reward accumulation, and trajectory efficiency are analyzed to compare how the algorithms perform on sparse-reward navigation tasks. The framework serves as a controlled experimental platform for studying vision-based exploration policies, coverage planning, and reinforcement learning behavior in aerial monitoring systems for precision agriculture.
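The sparse first-visit reward and local observation window described above can be illustrated with a toy grid environment. This is a minimal sketch under stated assumptions, not the project's actual environment: the class name `CoverageGrid`, the action encoding, the window size, and the reward value of 1.0 are all hypothetical, and the MicroUAV-2D simulator is not used here.

```python
import numpy as np

class CoverageGrid:
    """Hypothetical toy environment: discrete motion, first-visit reward, local window."""

    # Assumed action encoding: 0 = up, 1 = down, 2 = left, 3 = right.
    MOVES = {0: (-1, 0), 1: (1, 0), 2: (0, -1), 3: (0, 1)}

    def __init__(self, field, window=1):
        # `field` stands in for the vegetation-index proxy map.
        self.field = np.asarray(field, dtype=float)
        self.window = window
        self.reset()

    def reset(self):
        self.pos = (0, 0)
        self.visited = np.zeros(self.field.shape, dtype=bool)
        self.visited[self.pos] = True
        return self.observe()

    def observe(self):
        # Zero-pad the map so windows near the border keep a fixed shape.
        w = self.window
        padded = np.pad(self.field, w, mode="constant")
        r, c = self.pos[0] + w, self.pos[1] + w
        return padded[r - w:r + w + 1, c - w:c + w + 1]

    def step(self, action):
        dr, dc = self.MOVES[action]
        rows, cols = self.field.shape
        # Clamp the move so the drone stays inside the field.
        r = min(max(self.pos[0] + dr, 0), rows - 1)
        c = min(max(self.pos[1] + dc, 0), cols - 1)
        self.pos = (r, c)
        # Sparse first-visit reward: 1 only the first time a cell is reached.
        reward = 0.0 if self.visited[r, c] else 1.0
        self.visited[r, c] = True
        done = bool(self.visited.all())
        return self.observe(), reward, done
```

Because revisited cells yield no reward, an agent trained on this signal is only paid for new coverage, which is what discourages redundant exploration.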

Open Repository

PPO-Driven Swarm Control

PPO-Driven Swarm Control is a hybrid autonomy framework that combines reinforcement learning with classical multi-robot coordination principles to enable scalable aerial swarm coverage. The system first trains a Proximal Policy Optimization (PPO) agent to perform local navigation and exploration using visual observations derived from vegetation-index field maps. This learned policy is then extended to a multi-agent setting in which each drone combines the PPO action with swarm-level control components: artificial potential fields for attraction–repulsion dynamics and graph-based consensus mechanisms for coordinated dispersion. Role-switching behaviors inspired by chemical reaction networks let agents transition dynamically between exploration, surveying, and defensive states, enabling adaptive swarm behavior during coverage tasks. The entire system is simulated on the MicroUAV-2D simulator (see the 'Platforms' tab), allowing rapid experimentation with perception-driven aerial autonomy and swarm dynamics. Experiments show that while single-agent reinforcement learning on its own struggles to scale, the hybrid architecture stabilizes swarm behavior, reduces redundant coverage, and improves spatial exploration efficiency. The framework provides a modular platform for studying the interaction between learned navigation policies and classical swarm coordination strategies in distributed aerial robotics systems.
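One plausible way to combine a learned action with the swarm-level terms is a weighted sum of velocity contributions. The sketch below is an illustration only: the action-to-direction table, the function names, the weights, and the specific repulsion and consensus formulas are assumptions rather than the repository's implementation. It shows only the repulsive half of the potential field and omits role switching.

```python
import numpy as np

# Hypothetical mapping from a discrete PPO action index to a unit direction.
ACTION_DIRS = np.array([[0.0, 1.0], [0.0, -1.0], [-1.0, 0.0], [1.0, 0.0]])

def repulsion(positions, i, radius=2.0):
    """Potential-field repulsion: push drone i away from neighbours within `radius`."""
    force = np.zeros(2)
    for j, other in enumerate(positions):
        if j == i:
            continue
        diff = positions[i] - other
        dist = np.linalg.norm(diff)
        if 0.0 < dist < radius:
            # Magnitude grows as the neighbour gets closer, and is zero at `radius`.
            force += (diff / dist) * (1.0 / dist - 1.0 / radius)
    return force

def consensus(positions, adjacency, i):
    """Standard graph consensus term: weighted sum of offsets to connected neighbours."""
    term = np.zeros(2)
    for j, other in enumerate(positions):
        term += adjacency[i][j] * (other - positions[i])
    return term

def blended_velocity(ppo_action, positions, adjacency, i,
                     w_rl=1.0, w_rep=1.0, w_con=0.1):
    """Weighted sum of the learned direction and the two swarm-level terms."""
    return (w_rl * ACTION_DIRS[ppo_action]
            + w_rep * repulsion(positions, i)
            + w_con * consensus(positions, adjacency, i))
```

With two drones at (0, 0) and (1, 0), a PPO action pointing along +y, and the default weights, the first drone's command is (-0.4, 1.0): the learned direction, a 0.5 push away from its neighbour, and a 0.1 pull from the consensus term.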

Open Repository

SwarmNavigator

A decentralized multi-agent reinforcement learning framework for 2D gridworld coverage and obstacle avoidance. The system studies collision-aware reward design, swarm-level exploration efficiency, and the behavioral differences between single-agent and multi-agent strategies under PPO and DQN formulations.
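As a rough illustration of what a collision-aware reward might look like in a gridworld, the sketch below scores one joint step for all agents. The function name, the penalty and bonus values, and the rule that agents targeting the same cell are treated as colliding are all assumptions made for this example, not details taken from the project.

```python
def collision_aware_rewards(next_cells, visited, obstacles,
                            new_cell_bonus=1.0, collision_penalty=1.0,
                            step_cost=0.01):
    """Per-agent rewards for one joint step (hypothetical reward shaping).

    next_cells: list of (row, col) cells each agent tries to enter.
    visited:    set of cells covered so far by any agent.
    obstacles:  set of blocked cells.
    """
    # Count how many agents target each cell to detect agent-agent collisions.
    counts = {}
    for cell in next_cells:
        counts[cell] = counts.get(cell, 0) + 1

    rewards = []
    for cell in next_cells:
        reward = -step_cost  # small cost per step to discourage idling
        if cell in obstacles or counts[cell] > 1:
            reward -= collision_penalty  # hit an obstacle or another agent
        elif cell not in visited:
            reward += new_cell_bonus  # first visit by any agent
        rewards.append(reward)
    return rewards
```

Sharing the `visited` set across agents is one simple way to reward swarm-level coverage rather than per-agent coverage. Whether the project uses a shared or per-agent visit map is not stated in the description.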

Open Repository

EventReflex Drone Navigation

A minimal neuromorphic autonomy study demonstrating event-based perception and spiking-inspired reflex navigation in a lightweight UAV-style environment. Instead of dense vision or learned policies, the system relies on temporal contrast, leaky integration, and fast reflex-like action selection for interpretable closed-loop behavior.
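The three ingredients named above (temporal contrast, leaky integration, and reflex-like action selection) can be sketched in a few lines. This is a minimal illustration under assumptions: the log-intensity event model, the left/right split of the field of view, the threshold values, and the action names are all hypothetical and may differ from the project's code.

```python
import numpy as np

def temporal_contrast_events(prev_frame, curr_frame, threshold=0.1):
    """Emit +1 / -1 events where log intensity rose / fell by more than `threshold`."""
    diff = np.log1p(curr_frame) - np.log1p(prev_frame)
    return (diff > threshold).astype(int) - (diff < -threshold).astype(int)

class ReflexNavigator:
    """Two leaky integrators (left and right half of the view) driving a reflex turn."""

    def __init__(self, leak=0.8, fire=3.0):
        self.leak = leak    # fraction of accumulated activity kept each step
        self.fire = fire    # activity level that triggers a reflex
        self.left = 0.0
        self.right = 0.0

    def step(self, events):
        half = events.shape[1] // 2
        # Leaky integration: decay the old state, then add new event activity.
        self.left = self.leak * self.left + np.abs(events[:, :half]).sum()
        self.right = self.leak * self.right + np.abs(events[:, half:]).sum()
        if max(self.left, self.right) < self.fire:
            return "forward"
        # Reflex: steer away from the side with more event activity.
        action = "turn_right" if self.left > self.right else "turn_left"
        self.left = self.right = 0.0  # reset after firing, like a spiking unit
        return action
```

Every quantity in the loop is a directly inspectable number (event counts and two integrator states), which is the sense in which this style of controller is interpretable.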

Open Repository

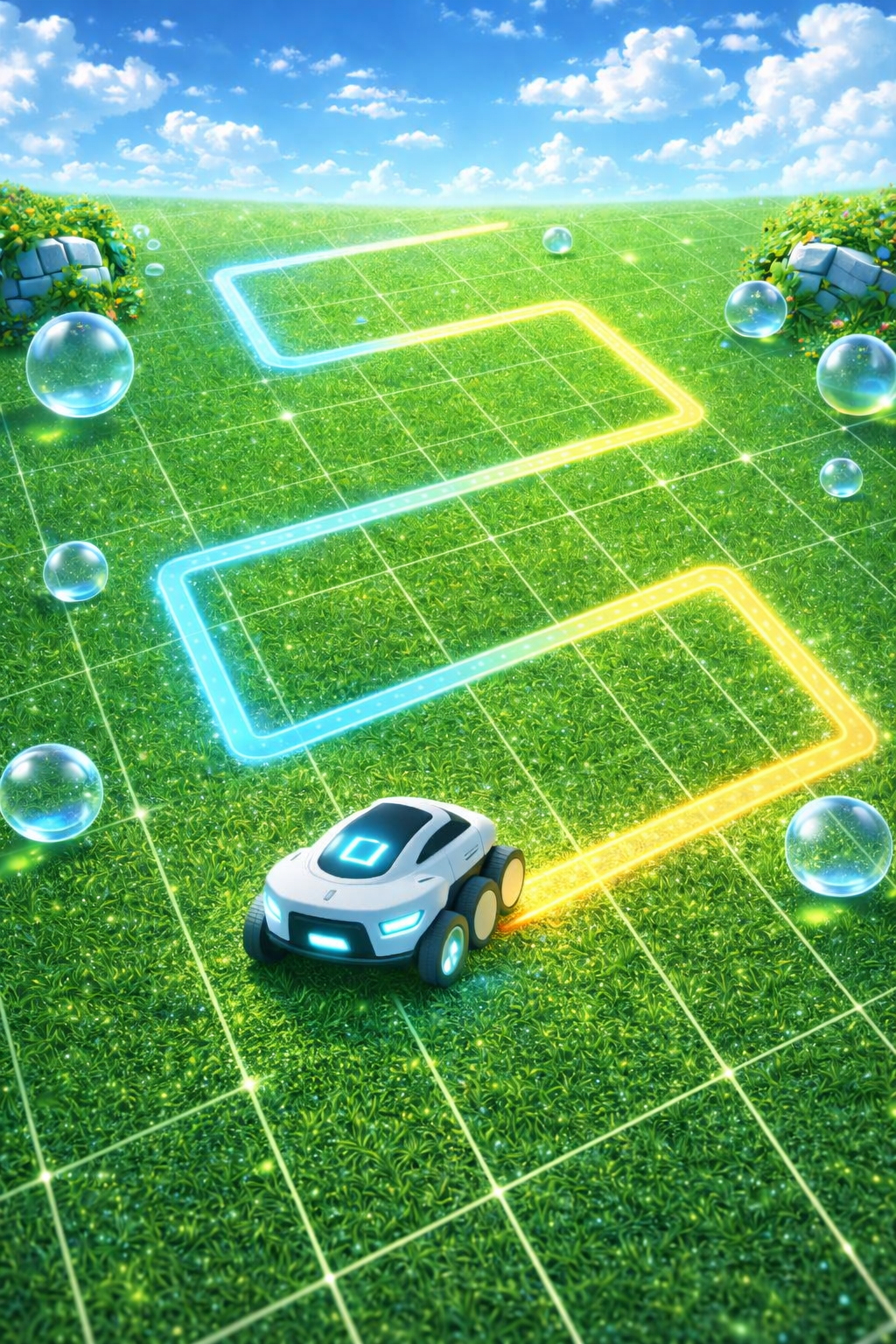

First-Order Boustrophedon Navigator

First-Order Boustrophedon Navigator is a coverage path planning framework that achieves systematic area coverage using a classical lawnmower-style traversal strategy. The system implements a boustrophedon motion pattern within a ROS2-based robotics pipeline, sweeping planar environments through alternating linear passes and controlled turning maneuvers. A proportional–derivative (PD) controller regulates lateral deviation from the reference path, minimizing cross-track error while maintaining stable forward motion along each coverage lane. The navigation stack continuously computes path-following corrections from the geometric deviation between the robot and the desired sweep trajectory, keeping the spacing between adjacent coverage passes consistent. The architecture integrates real-time telemetry collection and ROS2 topic logging, enabling quantitative evaluation of coverage efficiency, path tracking accuracy, and controller performance during traversal. Through trajectory logging and post-run analysis, the framework computes cross-track error statistics, lane tracking stability, and cumulative coverage metrics across the entire sweep sequence. The result is a reproducible experimental platform for analyzing classical coverage path planning strategies and feedback-based trajectory tracking within modern ROS2 robotic systems.
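The two core pieces described above, lane generation and PD correction of cross-track error, can be sketched without any ROS2 dependency. The function names, the rectangular field, the sign convention (positive error to the left of travel, positive command counter-clockwise), and the gains are assumptions made for illustration. The repository's actual node structure and tuning are not reproduced here.

```python
import math

def boustrophedon_waypoints(width, height, lane_spacing):
    """Lane endpoints for a lawnmower sweep of a width x height rectangle."""
    waypoints = []
    y = 0.0
    left_to_right = True
    while y <= height + 1e-9:
        start_x, end_x = (0.0, width) if left_to_right else (width, 0.0)
        waypoints.append((start_x, y))
        waypoints.append((end_x, y))
        y += lane_spacing
        left_to_right = not left_to_right  # reverse direction on the next lane
    return waypoints

def cross_track_error(pos, a, b):
    """Signed perpendicular distance from `pos` to the lane a -> b.

    Positive when the robot is to the left of the direction of travel.
    """
    dx, dy = b[0] - a[0], b[1] - a[1]
    return (dx * (pos[1] - a[1]) - dy * (pos[0] - a[0])) / math.hypot(dx, dy)

class PDController:
    """PD law on cross-track error; the output is a steering (angular) command."""

    def __init__(self, kp, kd):
        self.kp = kp
        self.kd = kd
        self.prev_error = None

    def update(self, error, dt):
        # No derivative term on the first sample, since there is no history yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        # Negative sign: an error to the left produces a clockwise (rightward) turn.
        return -(self.kp * error + self.kd * derivative)
```

In a ROS2 node, the value returned by `update` would typically be written to the angular component of a velocity command while the linear speed is held constant along each lane. That wiring is left out here to keep the sketch self-contained.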

Open Repository